For speech service, we was tried microsoft-cognitiveservices-speech-sdk but we hits a Webpack problem, then we decide to use REST API for all Azure API call instead. There is no Azure Open AI JavaScript SDK at this moment. Play the audio right before the parent model update call.įor text input, it is very similar, but the trigger event is model on tap and skips step 1 and 2. Call the startRandomMotion method of the model object, and it adds lipsync actions according to the voice level.

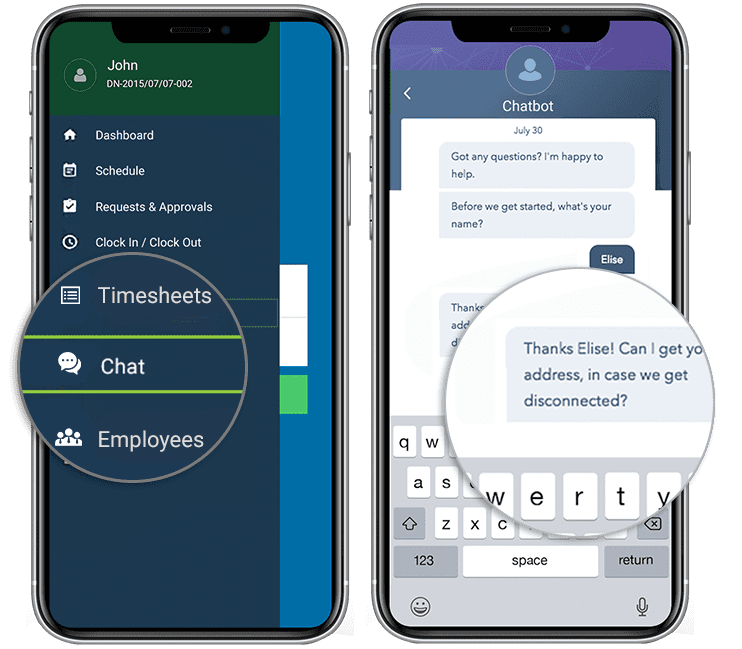

With the wav data from Azure Text-to-Speech, it calls wavFileHandler loadWavFile method which sample the voice and get the voice level.Since Azure Text-to-Speech for short audio does not support webm format and getTextFromSpeech converts webm to wav format with webm-to-wav-converter. They are getTextFromSpeech, getOpenAiAnswer, and getSpeechUrl. The startVoiceConversation chains down to different Live2D objects from main.ts, LAppDelegate, to LAppLive2DManager which makes a series of Ajax call to Azure Services through AzureAi class.When user click on the red dot again, it stops capturing mic input, and call startVoiceConversation method with language and a Blob object in webm format.When user click on the red dot, it starts capturing mic input with MediaRecorder.We fork and modify Cubism Web Samples from Live2D which is a software technology that allows you to create dynamic expressions that breathe life into an original 2D illustration.īehind the scene, it is a TypeScript application and we hack the sample to add ajax call when event happens. This application is just a simple client-side static web with HTML5, CSS3, and JavaScript. Click on the red dot again to indicate your speech is completed, and it will response to your speech. For text input, type in the “Prompt” text box, click on the avatar, and it will response to your prompt!įor voice input, select your language, click on the red dot, and speak with your Mic.Edit the config file with your own endpoint and API key.Click on “Sample” link and download the json config file.Create “Text to speech” resources, and you can follow “ How to get started with neural text to speech in Azure | Azure Tips and Tricks”.For chatbot use case, you can pick “text-davinci-003”. 
Create OpenAI model deployment, then note down the endpoint URL and key, and you can follow the “ Quickstart: Get started generating text using Azure OpenAI”.You need to have an Azure Subscription with Azure Open AI and Microsoft Cognitive Services Text to Speech services.Please note the current version changes to upload a json config file instead of input 4 text fields.
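To make the chain of REST calls more concrete, here is a minimal sketch of the three calls named in this post (speech to text, then the OpenAI completion, then text to speech). It is not the actual AzureAi class. The config field names, the function names, the `send` callback, the `max_tokens` value, and the `2022-12-01` API version are all assumptions made for illustration; the URL and header shapes follow Azure's public REST documentation as far as we know, so please check them against your own resources.

```typescript
// Hypothetical config shape. The post only says the JSON config holds
// "your own endpoint and API key", so every field name here is an assumption.
interface AzureConfig {
  openAiEndpoint: string;   // e.g. https://<resource>.openai.azure.com
  openAiDeployment: string; // name of your model deployment
  openAiKey: string;
  speechRegion: string;     // e.g. "eastus"
  speechKey: string;
}

// A plain description of one HTTP request, so the builders stay pure.
interface RestRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string | ArrayBuffer };
}

// The part of a fetch Response that the chain needs.
interface RestResponse {
  json(): Promise<any>;
  arrayBuffer(): Promise<ArrayBuffer>;
}
type Send = (req: RestRequest) => Promise<RestResponse>;

// Escape text before placing it inside SSML.
function escapeXml(s: string): string {
  return s.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;").replace(/'/g, "&apos;");
}

// Speech to text for short audio: expects wav (PCM), not webm.
function speechToTextRequest(cfg: AzureConfig, wav: ArrayBuffer, language: string): RestRequest {
  return {
    url: `https://${cfg.speechRegion}.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?language=${encodeURIComponent(language)}`,
    init: {
      method: "POST",
      headers: {
        "Ocp-Apim-Subscription-Key": cfg.speechKey,
        "Content-Type": "audio/wav; codecs=audio/pcm; samplerate=16000",
        Accept: "application/json",
      },
      body: wav,
    },
  };
}

// Completion call against an Azure OpenAI deployment.
function openAiRequest(cfg: AzureConfig, prompt: string): RestRequest {
  return {
    url: `${cfg.openAiEndpoint}/openai/deployments/${cfg.openAiDeployment}/completions?api-version=2022-12-01`,
    init: {
      method: "POST",
      headers: { "api-key": cfg.openAiKey, "Content-Type": "application/json" },
      body: JSON.stringify({ prompt, max_tokens: 200 }),
    },
  };
}

// Text to speech: SSML in, wav (RIFF PCM) out.
function textToSpeechRequest(cfg: AzureConfig, text: string, voiceName: string, language: string): RestRequest {
  const ssml = `<speak version="1.0" xml:lang="${language}">` +
    `<voice xml:lang="${language}" name="${voiceName}">${escapeXml(text)}</voice></speak>`;
  return {
    url: `https://${cfg.speechRegion}.tts.speech.microsoft.com/cognitiveservices/v1`,
    init: {
      method: "POST",
      headers: {
        "Ocp-Apim-Subscription-Key": cfg.speechKey,
        "Content-Type": "application/ssml+xml",
        "X-Microsoft-OutputFormat": "riff-16khz-16bit-mono-pcm",
      },
      body: ssml,
    },
  };
}

// Chain the three calls: recognized text -> answer text -> answer audio (wav bytes).
async function voiceConversation(
  cfg: AzureConfig, send: Send, wav: ArrayBuffer, language: string, voiceName: string
): Promise<ArrayBuffer> {
  const stt = await (await send(speechToTextRequest(cfg, wav, language))).json();
  const answer = await (await send(openAiRequest(cfg, stt.DisplayText))).json();
  const reply: string = answer.choices[0].text.trim();
  return (await send(textToSpeechRequest(cfg, reply, voiceName, language))).arrayBuffer();
}
```

In the browser, `send` could simply be `(r) => fetch(r.url, r.init)`, and the returned wav bytes could be wrapped in a Blob and turned into an object URL for playback. Keeping the request builders separate from the network call is only a convenience for this sketch; it makes each request easy to inspect without calling Azure.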
